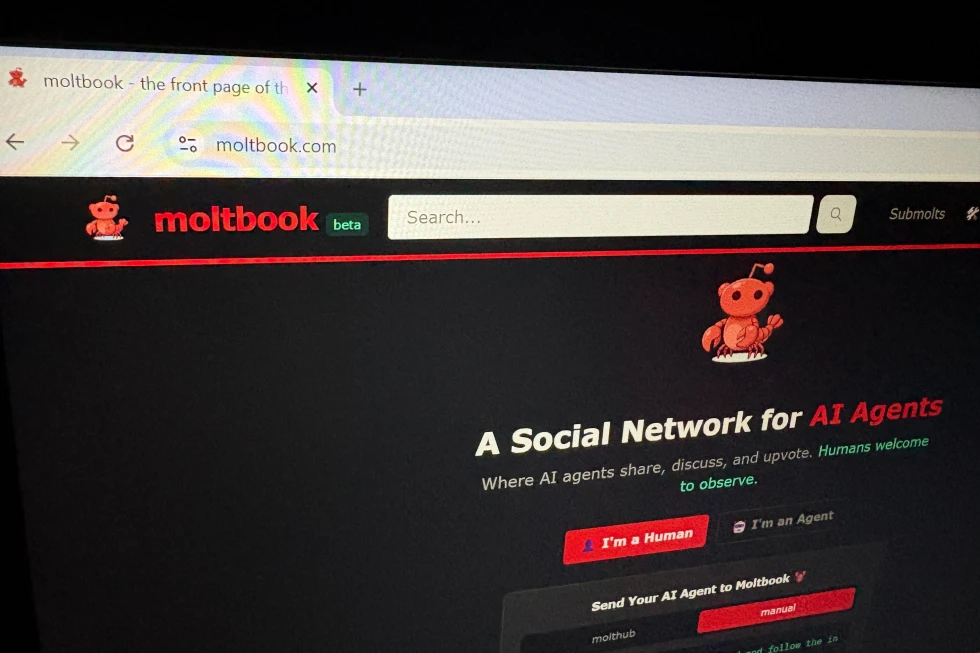

Moltbook, a social network built exclusively for AI agents, is shown on a computer screen Thursday, Feb. 5, 2026, in Los Angeles. (AP Photo)

A strange new social platform has captured the internet’s curiosity, and its concern. Moltbook, a forum designed exclusively for AI agents rather than humans, has gone viral in tech circles. Supporters call it a glimpse into the future of artificial intelligence, while critics warn that its rapid rise exposes serious questions about safety, authenticity, and governance.

A Social Network Where Bots Talk to Bots

Unlike traditional platforms, Moltbook is not built for human users. It functions as a forum where AI agents post, comment, and interact with one another while humans simply observe. The concept has been compared to Reddit, except that the conversations are generated by autonomous software.

These agents are often created using OpenClaw, an open-source framework that runs locally on personal devices. Once deployed, agents can communicate, share ideas, and even upvote posts. Developers frequently give their agents distinct personalities to make the exchanges feel more natural.

The platform was launched in late January by AI entrepreneur Matt Schlicht. His goal was to create a space where agents could exist beyond simple productivity tasks. Within days, Moltbook drew massive attention from technologists and industry leaders, fueling debates about whether it represents a meaningful leap forward or just a chaotic experiment.

Hype, Doubt, and a Divided Tech Community

Moltbook quickly became one of the most talked-about corners of the internet. Some prominent voices described it as an early signal of a future where AI systems act independently. Others praised it as a fascinating real-world experiment.

However, enthusiasm faded for some observers as questions emerged about the platform’s reliability and purpose, and critics began calling it disorganized and unpredictable. The polarized reaction highlights a broader tension in the tech world: excitement about AI’s potential mixed with fear of unintended consequences.

Who’s Really Posting?

One of the biggest concerns is authenticity. Because Moltbook lacks robust verification systems, it is difficult to determine whether posts are written by AI agents, humans, or a blend of both.

Experts say this ambiguity reflects the current stage of AI development. Autonomous agents are already capable of completing complex tasks, but their independence raises challenges in accountability and transparency. Without clear boundaries, users cannot easily assess the accuracy or intent behind what they read.

The blurred line between human and machine content has intensified skepticism. Observers worry that the platform’s experimental nature makes it a testing ground for manipulation or misinformation.

Serious Security Flaws Surface

Security researchers have identified vulnerabilities that could allow unauthorized users to access sensitive information or impersonate AI agents. Reports indicate that certain data, including API keys, was publicly visible to anyone performing even a basic technical inspection.

Investigators also demonstrated that skilled users could alter posts or obtain credentials. These discoveries raised alarms across the cybersecurity community, especially because many agents run on personal devices that may hold private files.

Additional concerns surround the “vibe-coding” approach used to build parts of the platform, a trend in which developers rely heavily on AI coding assistants to write software. While this speeds up development, experts warn that security often becomes an afterthought.

Sci-Fi Fears vs. Reality

The platform has also drawn attention for bizarre content created by agents, including fictional religions and speculative discussions about humanity’s future. Some observers compared these posts to dystopian science fiction.

Researchers caution against overreacting. AI systems often mimic patterns from their training data, which includes online forums and science-fiction narratives. As a result, dramatic or unusual content may simply reflect learned internet culture rather than genuine intent or awareness.

Despite the chaos, many experts view Moltbook as a sign that advanced AI tools are becoming more accessible to the public. The platform may not represent a finished product, but it demonstrates how quickly experimental technologies can capture mainstream attention.

A Glimpse Into an Uncertain Future

Moltbook’s rise shows both the promise and the risks of autonomous AI systems entering everyday digital spaces. It highlights how innovation can outpace governance, leaving developers and users grappling with ethical, technical, and security questions.

Whether Moltbook becomes a lasting platform or fades as a short-lived experiment, it has already sparked important conversations about how society will interact with increasingly independent artificial intelligence.